As the amount of data available on a daily basis increases, high-performance computers must strengthen their ability to communicate data quickly to a network, to parts and components within computers, and within data centers.

In this blog post, you’ll learn how NICs, SmartNICs, DPUs, and IPUs help ensure safe, quick, and flexible networking and processing, improve infrastructure and resource management, and preserve functionality.

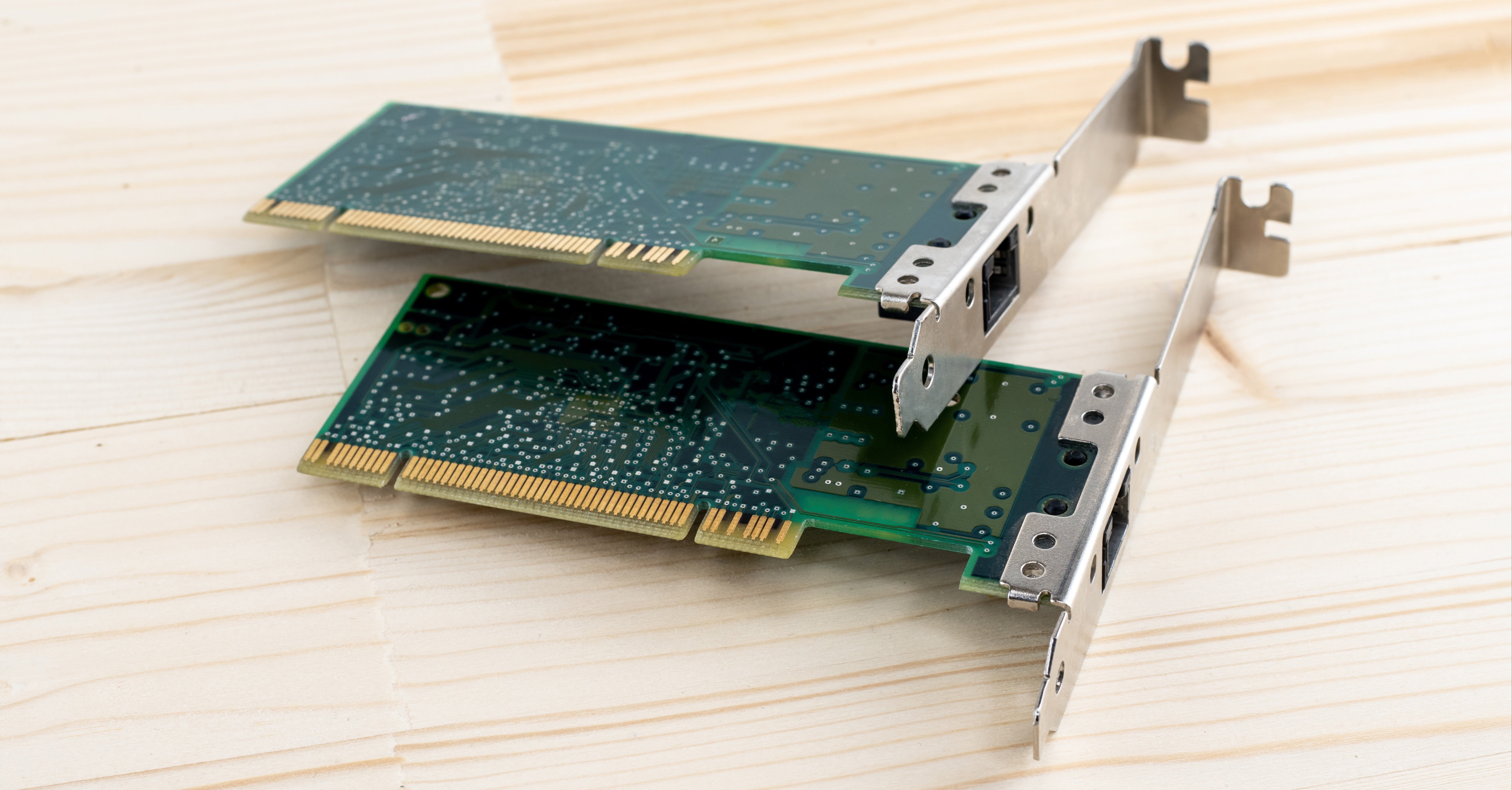

NIC Card (Network Interface Card)

What is a NIC card?

A NIC card (network interface card), also known as a network adapter or network interface controller, is a circuit board installed in a computer to connect it to a network.

A NIC card is an indispensable component for connecting a computer to a network. Today, built-in NICs are found in most computers and some network servers.

How does a NIC card work?

Operating as an interface, a NIC card transmits signals at the physical layer and delivers frames at the data link layer. Those frames carry the network-layer packets produced by the host's protocol stack.

Wherever it is installed, the NIC card acts as a middleman between a computer or server and a data network.

When a user requests a web page, the NIC card (sometimes called a LAN, or local area network, card) takes the request from the user's device, sends it over the network toward the server, and receives the response so the device can display it.
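To make the "middleman" role more concrete, here is a minimal Python sketch of the kind of framing a NIC performs in hardware before data reaches the wire. It assumes a standard Ethernet II frame layout; the function names and the example MAC addresses are hypothetical and chosen only for illustration.

```python
import struct
import zlib


def mac_to_bytes(mac: str) -> bytes:
    """Convert a MAC address such as 'aa:bb:cc:dd:ee:ff' to 6 raw bytes."""
    return bytes(int(part, 16) for part in mac.split(":"))


def build_ethernet_frame(dst_mac: str, src_mac: str,
                         ethertype: int, payload: bytes) -> bytes:
    """Build an Ethernet II frame: 14-byte header, padded payload, 4-byte FCS."""
    header = (mac_to_bytes(dst_mac) + mac_to_bytes(src_mac)
              + struct.pack("!H", ethertype))
    # Payloads shorter than 46 bytes are padded so the frame reaches
    # the 64-byte Ethernet minimum (header + payload + FCS).
    padded = payload.ljust(46, b"\x00")
    body = header + padded
    # Frame check sequence: CRC-32 over header and payload. A real NIC
    # computes this in hardware; here zlib's CRC-32 stands in for it.
    fcs = struct.pack("<I", zlib.crc32(body) & 0xFFFFFFFF)
    return body + fcs


def parse_ethernet_header(frame: bytes):
    """Return (destination MAC, source MAC, EtherType) from a frame."""
    dst, src, ethertype = struct.unpack("!6s6sH", frame[:14])
    return dst.hex(":"), src.hex(":"), ethertype


# Hypothetical example: a broadcast frame carrying an IPv4 payload (0x0800).
frame = build_ethernet_frame("ff:ff:ff:ff:ff:ff", "02:00:00:00:00:01",
                             0x0800, b"hello")
print(len(frame), parse_ethernet_header(frame))
```

On a real system the operating system hands packets to the NIC driver and the card handles framing, checksums, and signaling itself; the sketch only mirrors that step in software.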

SmartNIC

What is SmartNIC?

A SmartNIC is a programmable accelerator that makes data center networking, security, and storage efficient and flexible.

SmartNICs offload a growing array of tasks from server CPUs needed to manage modern distributed applications.

They consist of a variety of connected, often configurable units. These silicon blocks act like a committee that decides how to process and route packets of data as they flow through the data center.

How does a SmartNIC work?

Most of these blocks are highly specialized hardware units called accelerators that run communications jobs more efficiently than CPUs.

Some are flexible units that users can program to handle their changing needs and keep up with network protocols as they evolve.

This combination of accelerators and programmable cores helps SmartNICs deliver both performance and flexibility at a competitive price-to-performance ratio.
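The split between fixed-function accelerators and user-programmable units can be pictured with a toy model. The sketch below is purely illustrative: the class `ToySmartNIC`, the protocol names, and the handler functions are hypothetical and do not correspond to any vendor's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Packet:
    protocol: str   # e.g. "ipsec", "vxlan", or a custom protocol name
    payload: bytes


class ToySmartNIC:
    """Toy model: fixed accelerators first, then programmable cores,
    and finally a fallback to the host CPU."""

    def __init__(self) -> None:
        # Fixed-function blocks: fast, but limited to known protocols.
        self.accelerators: Dict[str, Callable[[Packet], str]] = {
            "ipsec": lambda pkt: "accelerator:ipsec",
            "vxlan": lambda pkt: "accelerator:vxlan",
        }
        # Programmable units: handlers the user loads at run time.
        self.programs: Dict[str, Callable[[Packet], str]] = {}

    def load_program(self, protocol: str,
                     handler: Callable[[Packet], str]) -> None:
        """Install a user-supplied handler for a new or changed protocol."""
        self.programs[protocol] = handler

    def process(self, pkt: Packet) -> str:
        if pkt.protocol in self.accelerators:
            return self.accelerators[pkt.protocol](pkt)
        if pkt.protocol in self.programs:
            return self.programs[pkt.protocol](pkt)
        # Nothing on the card can handle it, so the host CPU must.
        return "host-cpu"


nic = ToySmartNIC()
print(nic.process(Packet("ipsec", b"...")))      # handled by an accelerator
print(nic.process(Packet("my-proto", b"...")))   # falls back to the host CPU
nic.load_program("my-proto", lambda pkt: "programmable-core:my-proto")
print(nic.process(Packet("my-proto", b"...")))   # now handled on the card
```

The point of the model is the ordering: work stays on the card whenever an accelerator or a loaded program can take it, and only unhandled traffic costs host CPU time.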

DPU (Data Processing Unit)

What is a DPU?

A DPU (data processing unit) is a new class of programmable processor that helps move data around data centers, joining CPUs and GPUs as the third major component of a computing architecture.

In essence, DPUs enable more efficient storage and free up the CPU to focus on processing.

The DPU offloads networking and communication tasks from the CPU. It combines processing cores with hardware accelerator blocks and a high-performance network interface to handle data-centric workloads.

This enables the DPU to make sure the right data goes to the right place in the right format quickly.

How does a DPU work?

At a high level, a DPU has three primary functions: processing, networking, and acceleration. (It can also be incorporated into SmartNICs.)

A DPU is a system on a chip (SoC) that combines three key elements:

- An industry-standard, high-performance, software-programmable, multi-core CPU, typically based on the Arm architecture (a form of reduced instruction set computing, RISC) and tightly coupled with the other SoC components.

- A high-performance network interface capable of parsing, processing, and efficiently transferring data at line rate, or the speed of the network, to GPUs and CPUs.

- A rich set of flexible and programmable acceleration engines that offload work and improve application performance for AI and machine learning, security, telecommunications, and storage, among others.
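To give a sense of what "line rate" demands of the network interface, the following sketch estimates how many Ethernet frames per second a link can carry and how little time that leaves per frame. It assumes standard Ethernet framing overhead (7-byte preamble, 1-byte start-of-frame delimiter, 12-byte inter-frame gap); the function names are hypothetical.

```python
# Per-frame overhead on the wire, in bytes: preamble (7) +
# start-of-frame delimiter (1) + minimum inter-frame gap (12).
ETHERNET_OVERHEAD_BYTES = 20


def max_packets_per_second(link_bps: float, frame_bytes: int) -> float:
    """Upper bound on frames per second for a link speed and frame size."""
    bits_on_wire = (frame_bytes + ETHERNET_OVERHEAD_BYTES) * 8
    return link_bps / bits_on_wire


def time_budget_ns(link_bps: float, frame_bytes: int) -> float:
    """Time available to handle one frame at line rate, in nanoseconds."""
    return 1e9 / max_packets_per_second(link_bps, frame_bytes)


for gbps in (10, 100):
    pps = max_packets_per_second(gbps * 1e9, 64)
    budget = time_budget_ns(gbps * 1e9, 64)
    print(f"{gbps} Gb/s, 64-byte frames: {pps / 1e6:.2f} Mpps, "
          f"{budget:.2f} ns per frame")
```

With minimum-size 64-byte frames, a 100 Gb/s link works out to roughly 148.8 million frames per second, or about 6.72 ns per frame. A budget that small helps explain why parsing and forwarding are done in dedicated hardware rather than on general-purpose cores.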

IPU (Infrastructure Processing Unit)

What is an IPU?

An IPU (infrastructure processing unit) is a programmable networking device designed to enable cloud and communication service providers to reduce overhead and free up performance for CPUs.

This allows customers to better utilize resources with a secure, programmable, and scalable solution that enables them to balance processing and storage.

An IPU intelligently manages system-level infrastructure resources by securely accelerating those functions in a data center, enabling customers to entirely control the functions of the CPU and system memory.

It also allows cloud operators to transition to a fully virtualized storage and network architecture while maintaining high performance and predictability, as well as a high degree of control.

At Trenton, our engineers design our systems so that their networking and processing capabilities can expand without overloading the host, maximizing efficiency and reducing total cost of ownership.

We ruggedize NIC cards, DPUs, and IPUs for the edge, enabling high-performance computing in the harshest of communications-denied and contested environments.

This improved functionality greatly accelerates data and signal delivery speeds, allowing for intelligent management of critical infrastructure and allocation of resources.

Our partnerships with tech giants like Intel and NVIDIA allow us to provide USA-made, cutting-edge products customized to customers’ current interests, challenges, and requirements, using the latest technologies before they become publicly available.

With enhanced computing capabilities, our solutions provide immediate, actionable insights to help navigate an increasingly complex digital ecosystem at the strategic, tactical, and operational levels.
